2025-Group 16

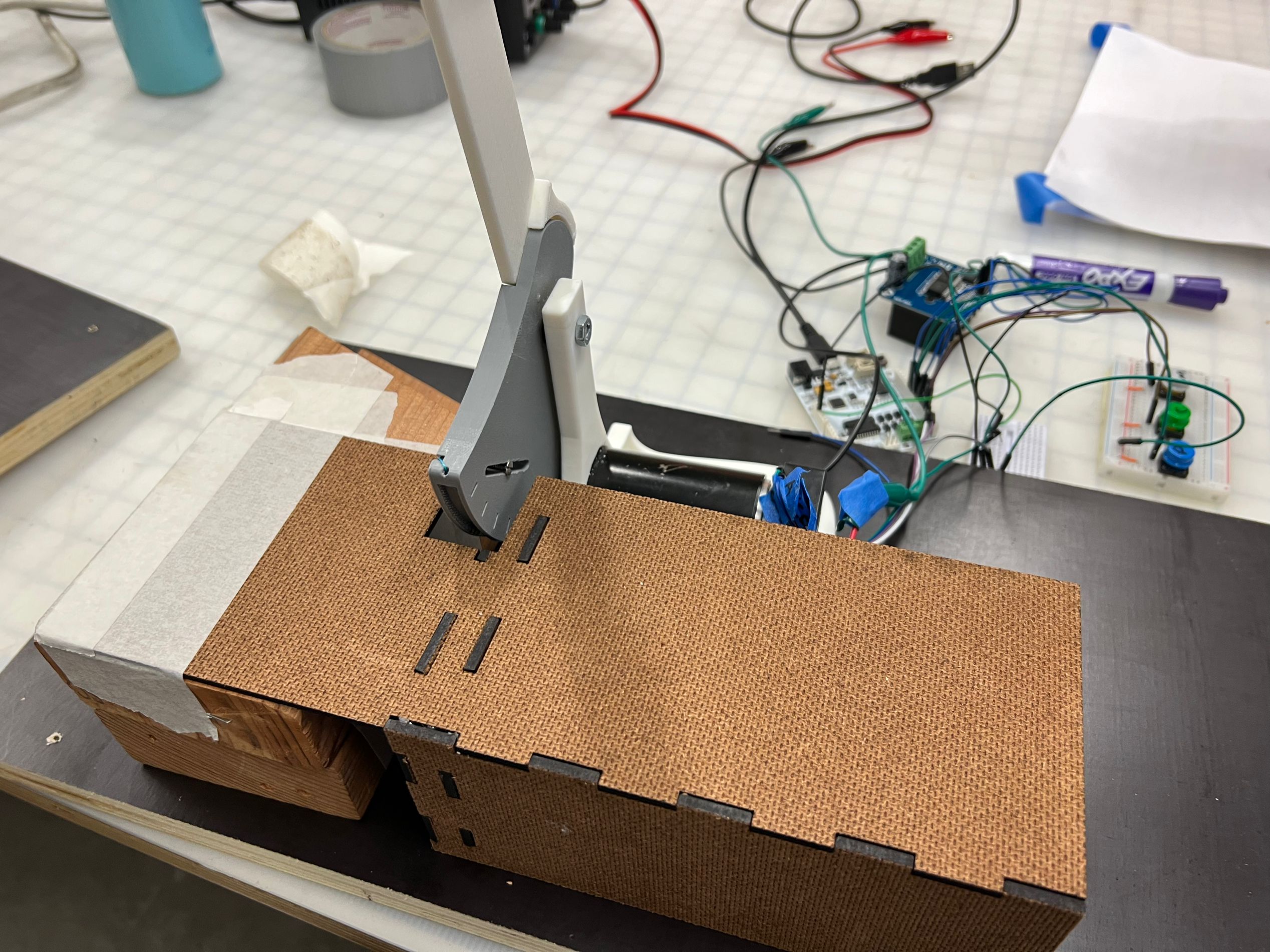

Haptic knife and particle jamming in use, with VR visualization of the demo “carrot”

Haptic Cooking Knife

Project team member(s): Miggy Silva, Tianyu Tu, Yuhao Huai, Venkata Brahmini Priya Pragada

Our project is a device that lets users feel the haptic experience of cutting ingredients. The device reads the position and speed of the knife handle to simulate the feel of cutting with a kitchen knife. Users can choose among three ingredients: mango, carrot, and green pepper. The goal is to make the ingredient-cutting process convincing by combining force feedback at the knife, a variable-stiffness object in the other hand, and a VR visualization. We demonstrated the device at the ME 327 Open House, where many users tried it and gave positive feedback.

Team photo.


Introduction

This project aims to design and implement a haptic interface that simulates the cutting process of various cooking ingredients. The system replicates the mechanical behavior of soft and semi-rigid materials (e.g., mango, carrot, bell pepper) through kinesthetic feedback, using a stylized knife interface connected to a programmable haptic device, together with a variable-stiffness system that simulates the feel of holding the ingredient. By exploring the mechanical and perceptual aspects of cutting interactions, we hope to contribute to both haptic system design and human-in-the-loop simulation research.

This project has a low infrastructure barrier but offers rich opportunities for learning and extension, including force modeling, material rendering, and visual-haptic illusions. It aligns well with our team’s interests and backgrounds, making it an effective hands-on exercise to deepen our understanding of haptic systems while strengthening skills in design, control, and testing.

Background

Recent work on simulating and analyzing cutting interactions has laid the groundwork for rendering realistic haptic feedback in virtual or physical interfaces. Mahvash and Hayward’s “Haptic Rendering of Cutting: A Fracture Mechanics Approach” proposes a physics-based model of knife–material interaction using fracture mechanics principles. They categorize cutting into three stages, elastic deformation, rupture, and steady-state cutting, and derive cutting force as a function of material fracture toughness. By isolating energy contributions from crack propagation and tool friction, their model enables efficient, realistic force feedback without full deformation simulation. This approach is directly applicable to our system, allowing us to define food types by their fracture toughness and render transitions in resistance as users "cut" through a virtual material. [1]

Nakazato and Kano’s “Proposal for a Virtual Reality System to Practice Knife Handling in Cooking” focuses on a VR-based training simulator for knife skills. They simulate cutting different vegetables by correlating CT scan density data with resistance feedback delivered through a haptic device. While their emphasis is on culinary education rather than realism, their method of varying force based on spatial density provides a useful paradigm for mapping internal structure to tactile feedback, particularly relevant for layered or fibrous foods like onion or okra. Their system also suggests incorporating scoring or feedback metrics into user interaction to enhance training effectiveness. [2]

Finally, in “Leveraging Multimodal Haptic Sensory Data for Robust Cutting”, researchers from Carnegie Mellon collected force and vibration data from a robot slicing 25 food types to train neural networks for material classification. While their focus is on robotic perception and not haptic rendering, their segmentation of food items into categories (e.g., soft, hard-skin, hard) and characterization of cutting phases (engagement, slicing, exit) are informative. These observed patterns help define force profiles for various foods, guiding our parameterization of resistance and force transients in the rendering engine, even without a learning component. [3]

Methods

Hardware Design and Implementation

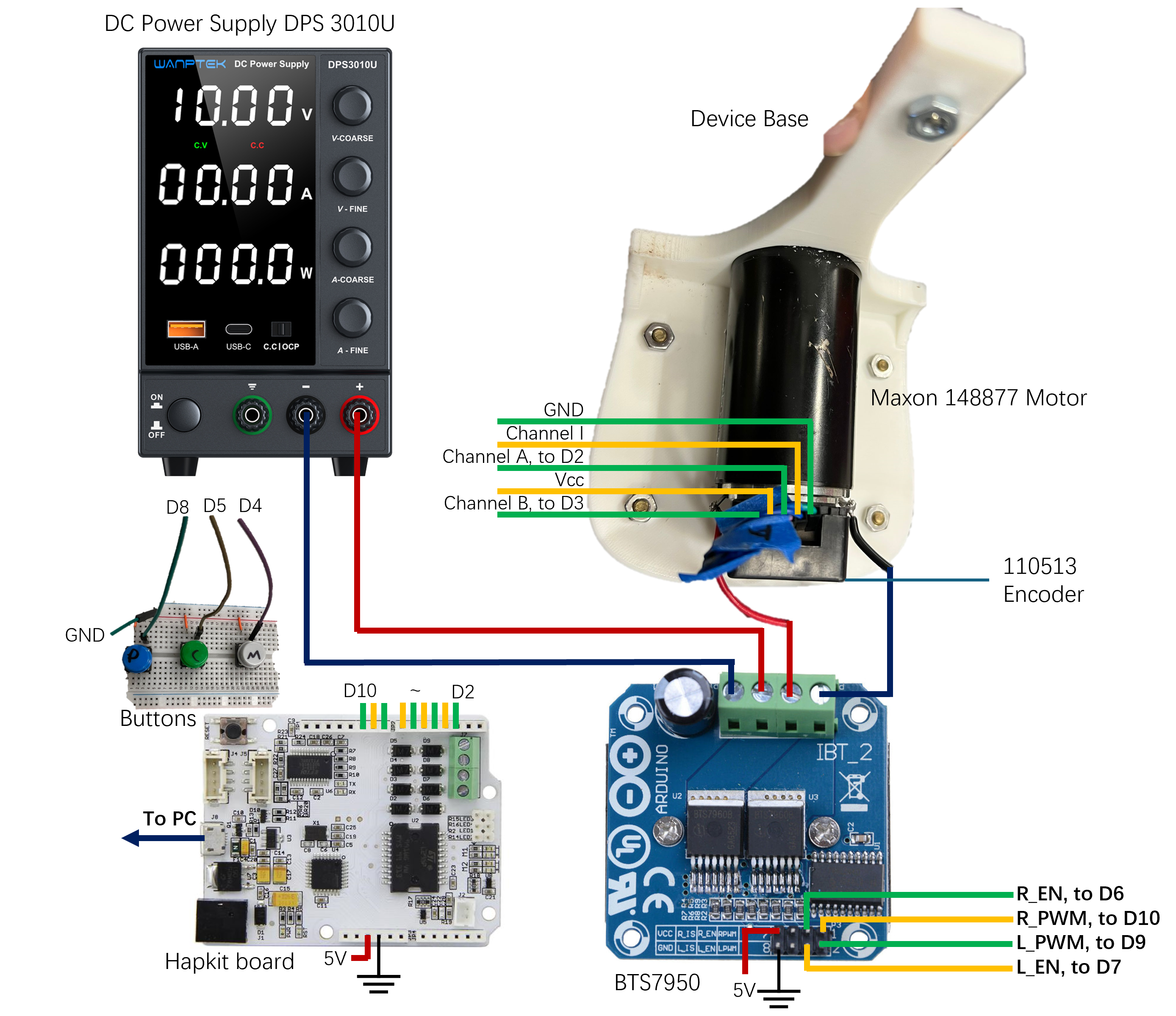

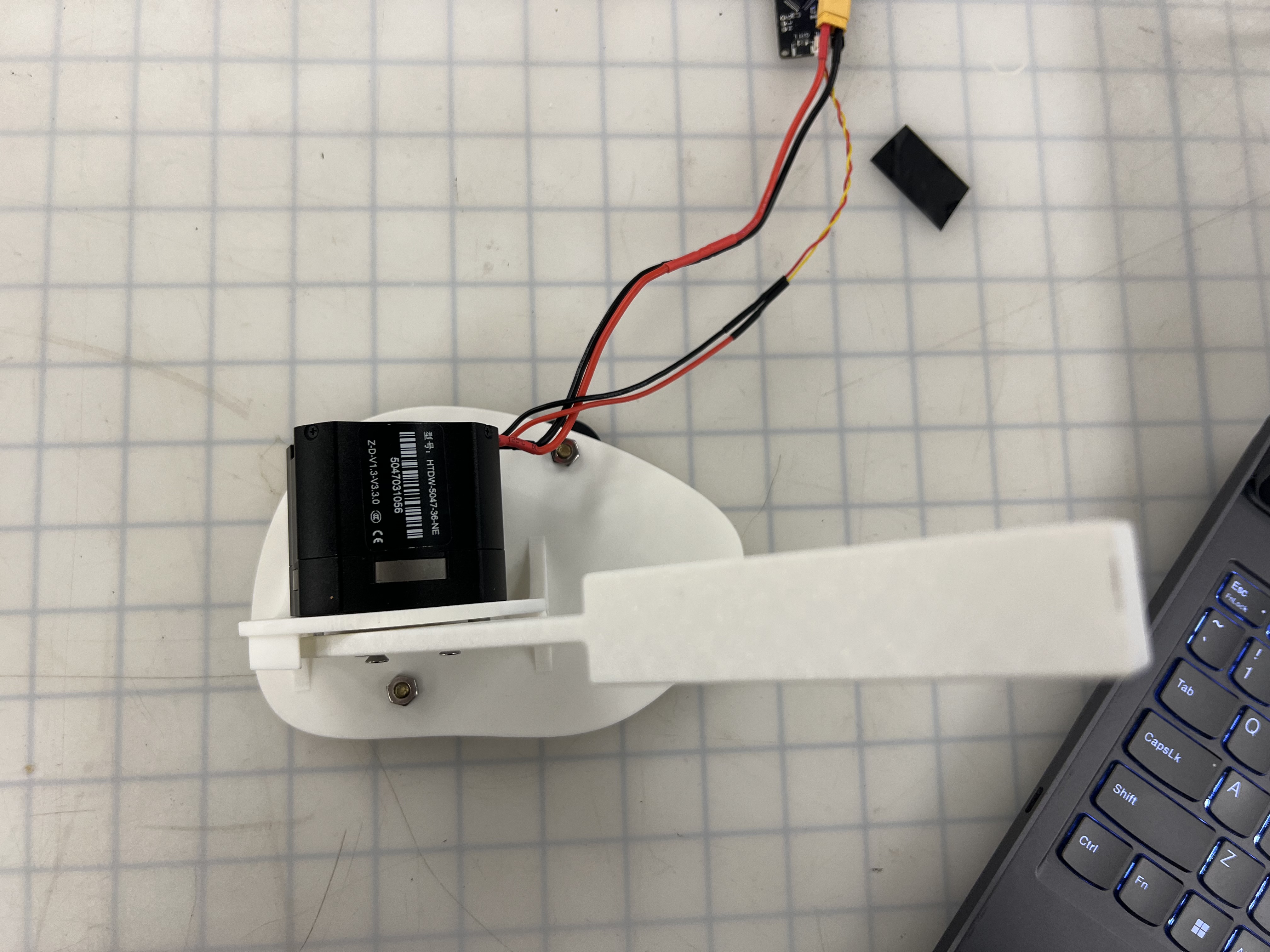

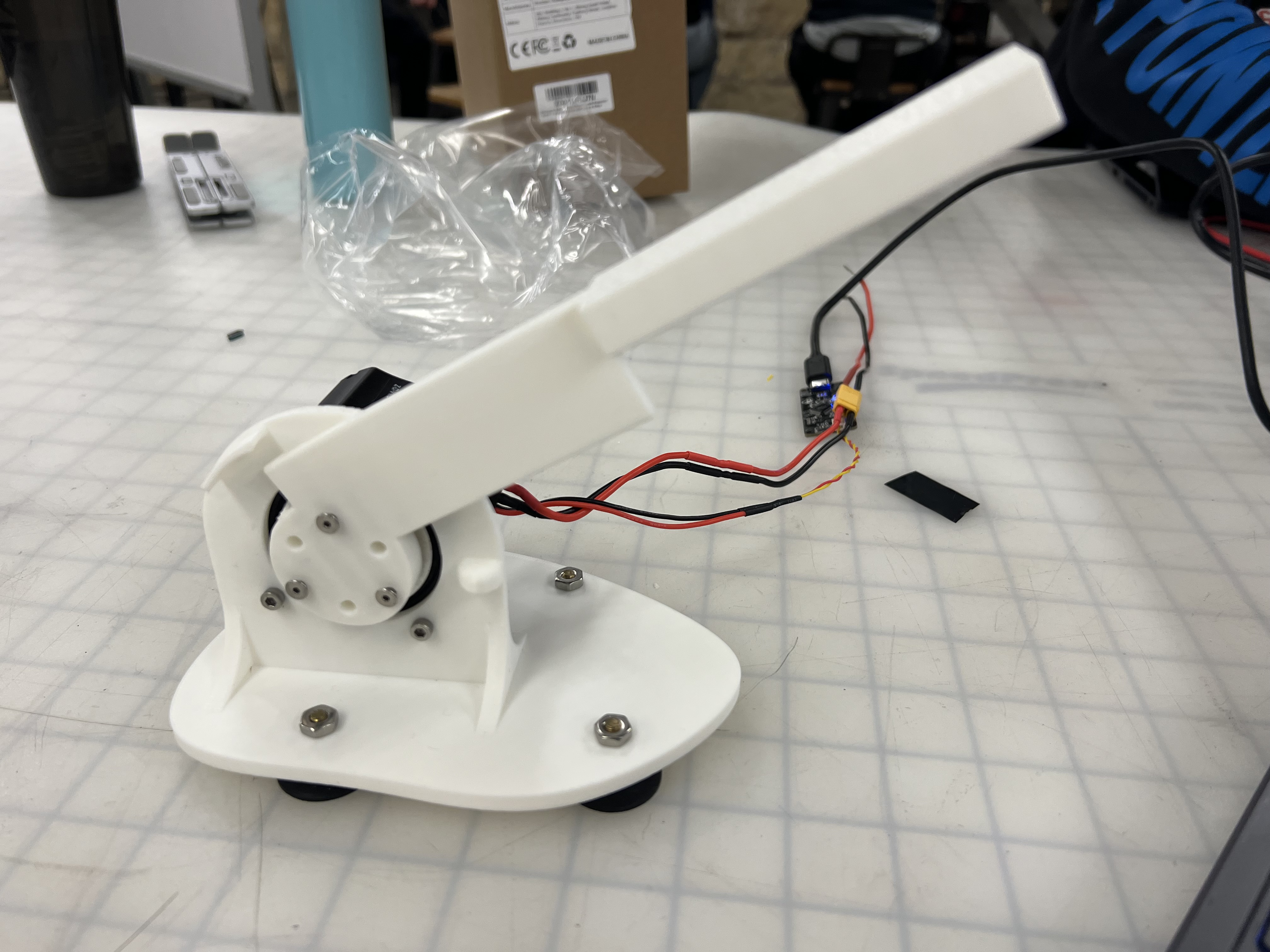

The haptic cutting device consists of several major components: a brushed DC motor (Maxon 148877) with an encoder (110513), which together form part number 199554; a Hapkit control board (based on the Arduino UNO); a custom stand; a custom knife handle; a capstan drive cable with a helical bolt; a BTS7960 motor driver; and a regulated DC power supply. The system can be divided into two main subsystems: mechanical and electrical.

In the mechanical subsystem, we modified the original Hapkit design by repositioning the suction cup base to be behind the rotational plane of the knife. This change was motivated by safety concerns, especially during testing with a direct-drive transmission. With direct drive, the knife can rotate continuously without colliding with the base—an important feature for tuning parameters like voltage, current, and PWM signal.

We initially selected the Maxon 148877 motor for its high stall torque of 2560 mNm at 48 V and 42.4 A, which satisfies the requirements for simulating cutting resistance. However, early direct-drive tests revealed several issues: the motor overheated, knife motion exhibited vertical oscillations ("bouncing"), and the haptic feedback was insufficient. Note that this instability may not stem solely from the transmission method.

As a result, we transitioned to a capstan drive system, adopting a mechanical design based on the Hapkit. A key improvement we made was adding screw threads to the capstan shaft to help guide the string and prevent it from overlapping. We also used a strong fishing line and increased the number of loops to generate higher friction, reducing slippage. This setup ensures that the string stays in place and can reliably transmit relatively large forces.

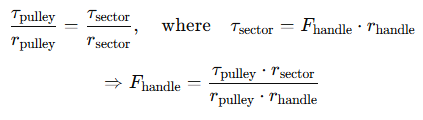

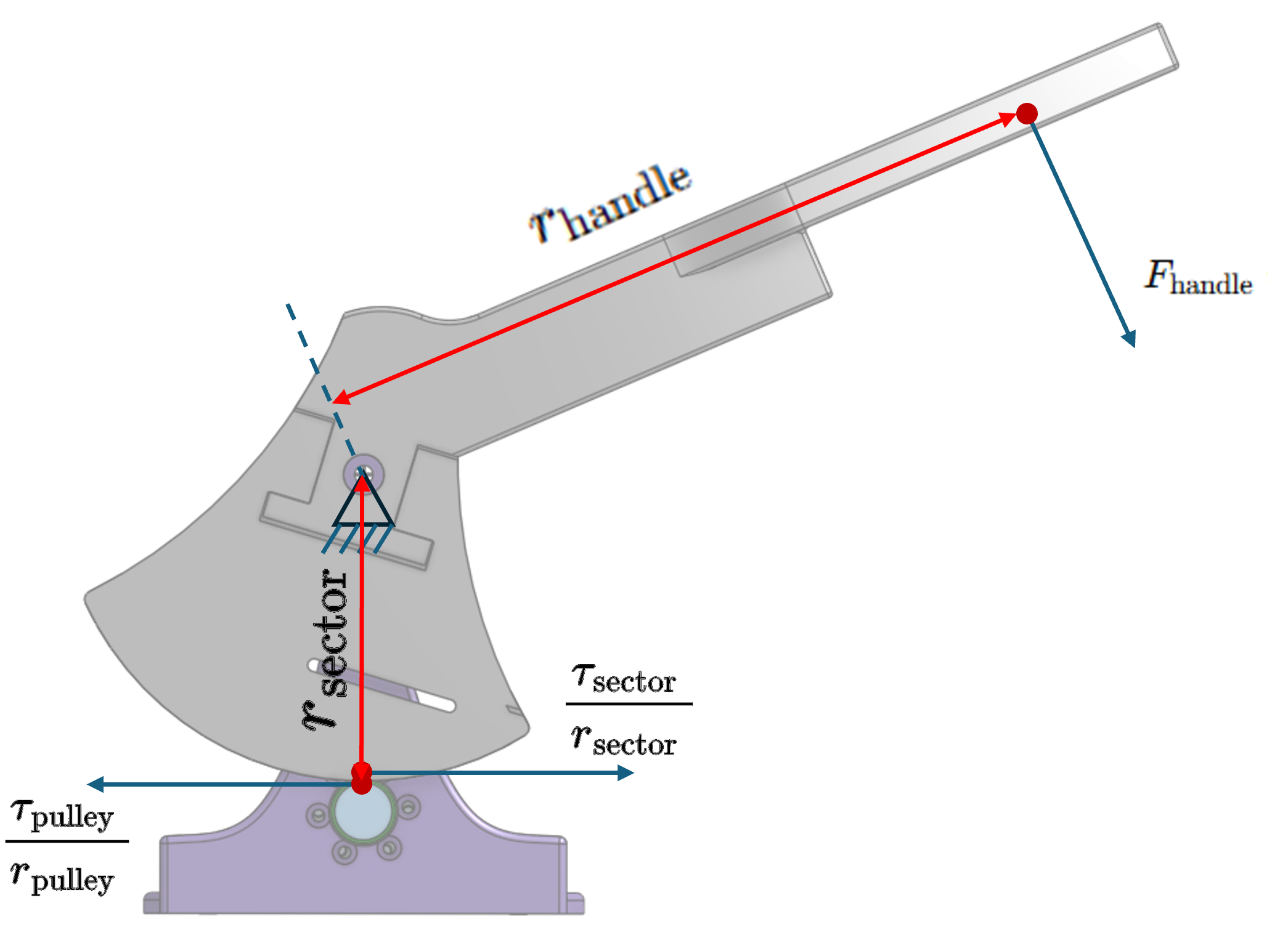

As shown in the diagram below, assuming the motor applies a constant torque tau_pulley to the pulley and the user applies a force F_handle perpendicular to the handle, the relationship is:

Mechanism design: force transmission analysis.

In contrast, under a direct-drive configuration:

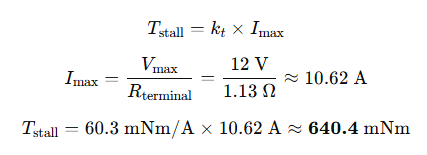

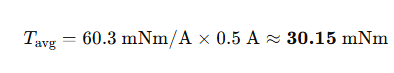
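Written out, the torque balance described above can be sketched as follows. The symbols follow the text (tau_pulley, F_handle, r_sector, r_handle, r_pulley); tau_direct is a label introduced here for the motor torque in the direct-drive case. The sketch assumes an ideal transmission with no friction or cable stretch, and uses the radii reported for this device (r_sector = 75 mm, r_handle = 150 mm, r_pulley = 10 mm).

```latex
% Capstan drive: the pulley torque is amplified by r_sector / r_pulley
% at the sector, and balanced by the user's force acting at r_handle.
\tau_{\mathrm{pulley}} \, \frac{r_{\mathrm{sector}}}{r_{\mathrm{pulley}}}
  = F_{\mathrm{handle}} \, r_{\mathrm{handle}}
\quad\Longrightarrow\quad
\tau_{\mathrm{pulley}}
  = F_{\mathrm{handle}} \, r_{\mathrm{handle}} \, \frac{r_{\mathrm{pulley}}}{r_{\mathrm{sector}}}

% Direct drive: the motor shaft carries the full handle torque.
\tau_{\mathrm{direct}} = F_{\mathrm{handle}} \, r_{\mathrm{handle}}

% Ratio of the two: the mechanical advantage of the capstan.
\frac{\tau_{\mathrm{direct}}}{\tau_{\mathrm{pulley}}}
  = \frac{r_{\mathrm{sector}}}{r_{\mathrm{pulley}}}
  = \frac{75\,\mathrm{mm}}{10\,\mathrm{mm}} = 7.5

% Check for a 50 N cutting force at the handle.
\tau_{\mathrm{pulley}}
  = 50\,\mathrm{N} \times 0.150\,\mathrm{m} \times \frac{10}{75}
  = 1.0\,\mathrm{N\,m} = 1000\,\mathrm{mNm}
```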

This implies a mechanical advantage of (r_sector / r_pulley) compared to direct drive. In our case, r_sector = 75 mm, r_handle = 150 mm, and r_pulley = 10 mm, resulting in a 7.5x amplification. For instance, a desired cutting force of 50 N at the handle requires the motor to produce a torque of 1000 mNm. When the motor is stalled, it behaves like a resistor, so the current is given by:

and outputs a stall torque of

In theory, the motor's maximum torque is limited by its stall current and torque constant:

This value is slightly below the torque needed to simulate real cutting forces without assistance. However, we employ a capstan drive with a 7.5× mechanical advantage, which allows us to amplify torque effectively.

In practice, we observed a peak current of about **0.5 A** on the power supply display, suggesting an average torque output of:

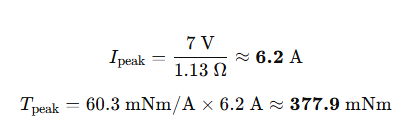

However, during short cutting bursts, the motor likely draws more current than shown on the panel. For instance, if the PWM signal is at 150 (≈7 V), the instantaneous current may reach:

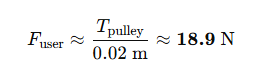

Through the capstan transmission (r_sector = 75 mm, r_handle ≈ 150 mm, r_pulley = 10 mm), this torque corresponds to a user-perceived force of:

This level of force is sufficient to create a realistic haptic cutting experience.
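The torque budget above can be reproduced approximately with a short script. This is only a sketch: it assumes an ideal brushed DC motor and derives the torque constant and winding resistance from the stall figures quoted in the text (2560 mNm at 48 V and 42.4 A), ignoring driver losses, back-EMF, and friction. The function and constant names are illustrative, and the resulting estimates may differ from the values in the original equations.

```python
# Stall figures quoted in the text for the Maxon 148877.
STALL_TORQUE_NM = 2.560     # 2560 mNm
STALL_CURRENT_A = 42.4
STALL_VOLTAGE_V = 48.0

# Derived constants (assumption: ideal motor, torque proportional to current).
TORQUE_CONSTANT = STALL_TORQUE_NM / STALL_CURRENT_A       # N*m per A
WINDING_RESISTANCE = STALL_VOLTAGE_V / STALL_CURRENT_A    # ohm

# Transmission geometry from the text.
R_SECTOR_M = 0.075
R_HANDLE_M = 0.150
R_PULLEY_M = 0.010


def stalled_current(voltage_v):
    """Current drawn by a stalled motor, which behaves like a resistor."""
    return voltage_v / WINDING_RESISTANCE


def motor_torque(current_a):
    """Motor shaft torque for a given winding current."""
    return TORQUE_CONSTANT * current_a


def handle_force(motor_torque_nm):
    """Force felt at the handle after the capstan amplification."""
    return motor_torque_nm * (R_SECTOR_M / R_PULLEY_M) / R_HANDLE_M


if __name__ == "__main__":
    avg = motor_torque(0.5)                       # 0.5 A read from the supply display
    burst = motor_torque(stalled_current(7.0))    # PWM 150, roughly 7 V
    print(f"average torque: {avg * 1000:.1f} mNm")
    print(f"burst torque:   {burst * 1000:.1f} mNm")
    print(f"burst force:    {handle_force(burst):.1f} N")
```

As a sanity check, `handle_force(1.0)` returns 50 N, matching the 1000 mNm per 50 N figure given earlier.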

In the electrical subsystem, a BTS7960 motor driver is connected to both the power supply and the motor. It receives the PWM and enable signals from the Hapkit board, which set the motor voltage while allowing a large current to pass. The Hapkit board reads the position signals from the encoder and controls the motor voltage by sending PWM signals to the BTS7960 driver.

Mechatronics design: hardware wiring.

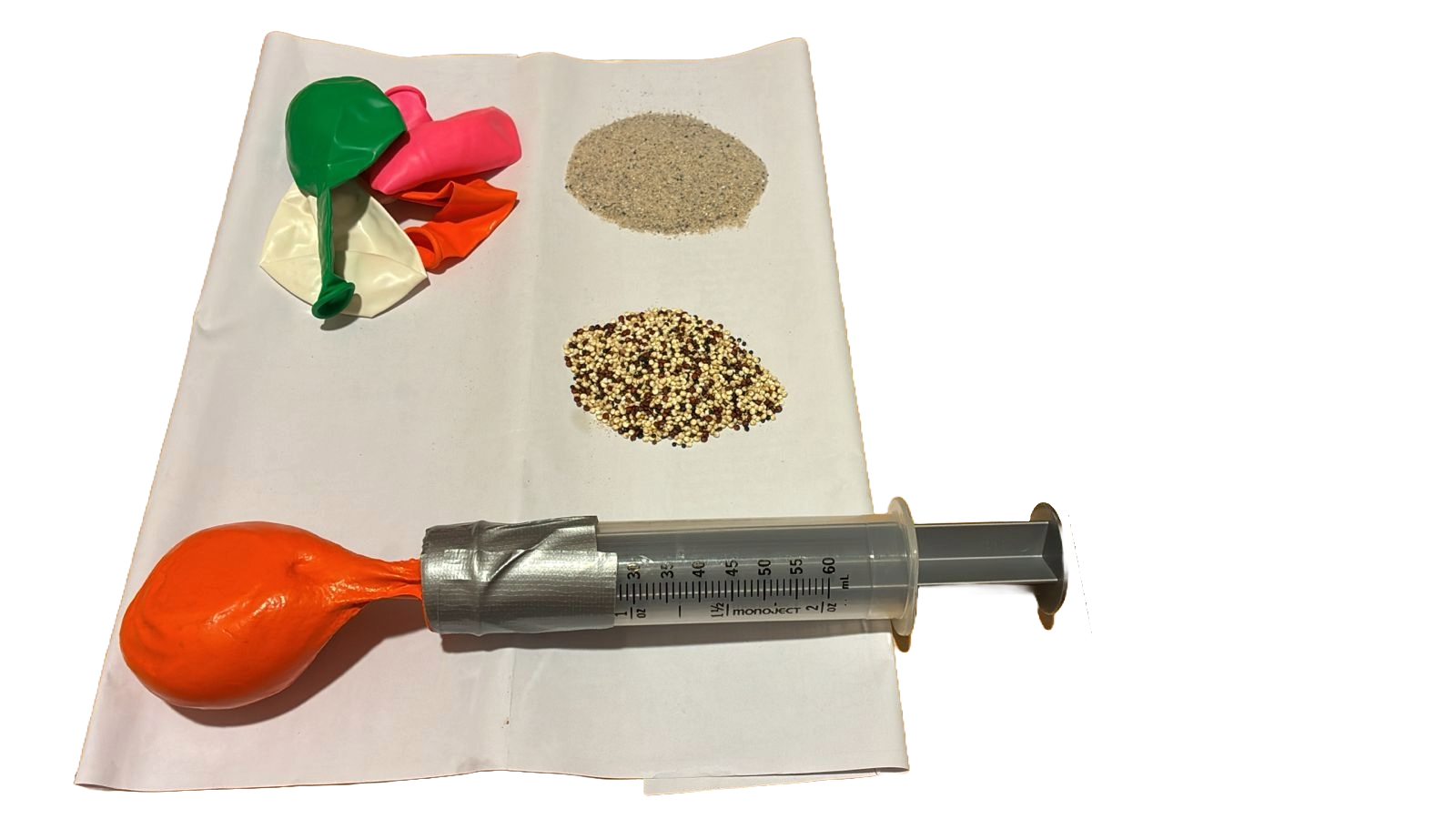

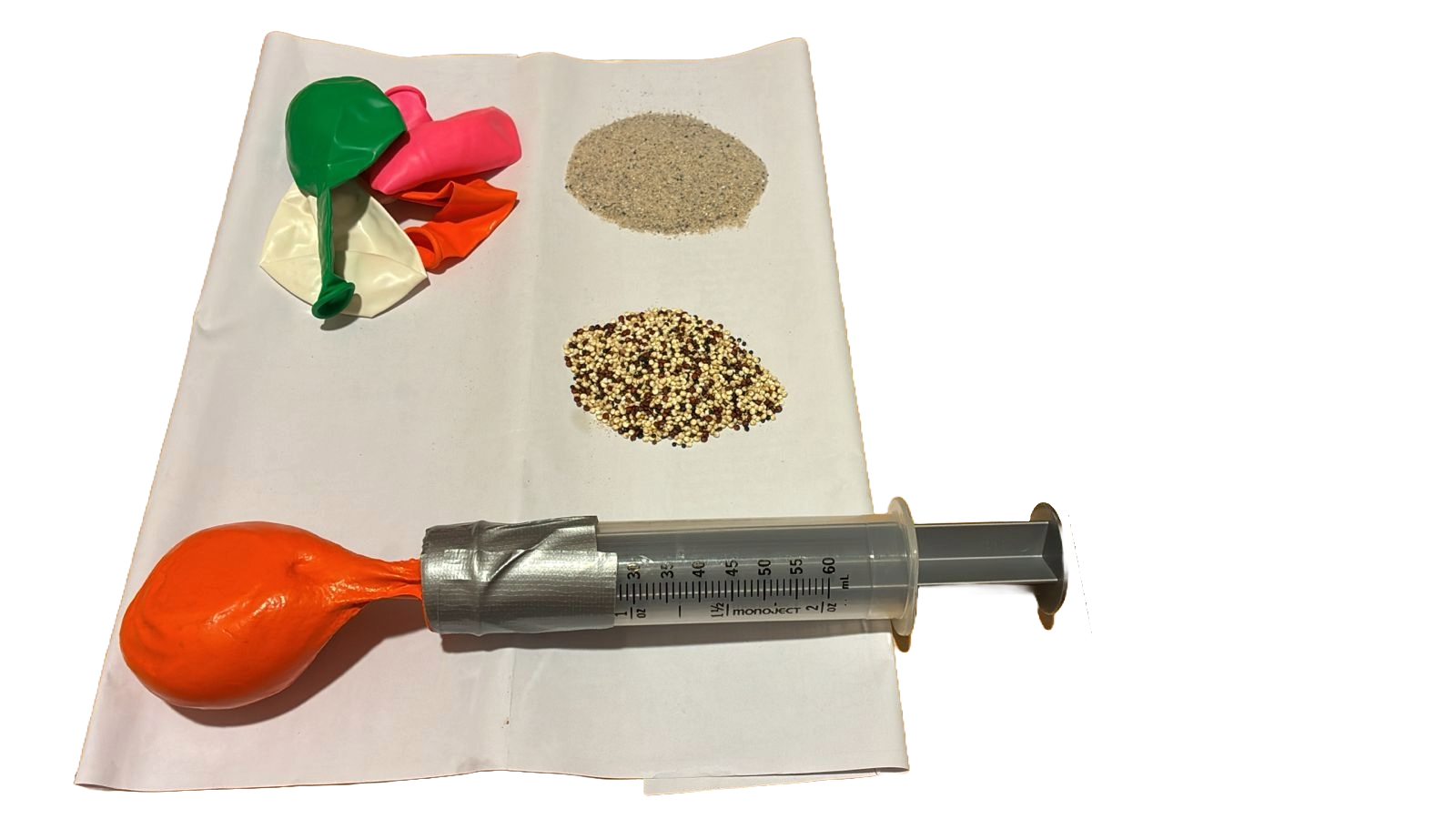

Our device also uses particle jamming to replicate the feel of holding various fruits and vegetables. The setup pairs a 60 ml syringe, which controls the jamming, with balloons filled with one of two types of particles: sand or quinoa. The mouth of the syringe is glued into the opening of the balloon to make the assembly airtight. By adjusting the amount of air and the amount of particles in the balloon, we can simulate the feel of different fruits and vegetables such as tomatoes, onions, avocados, and potatoes. When air is pumped into the balloon, the stiffness of the sand-filled balloon decreases, giving a soft, springy feel. When air is drawn out to create a vacuum, the stiffness increases, giving a rock-like feel.

Setup for particle jamming.

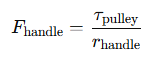

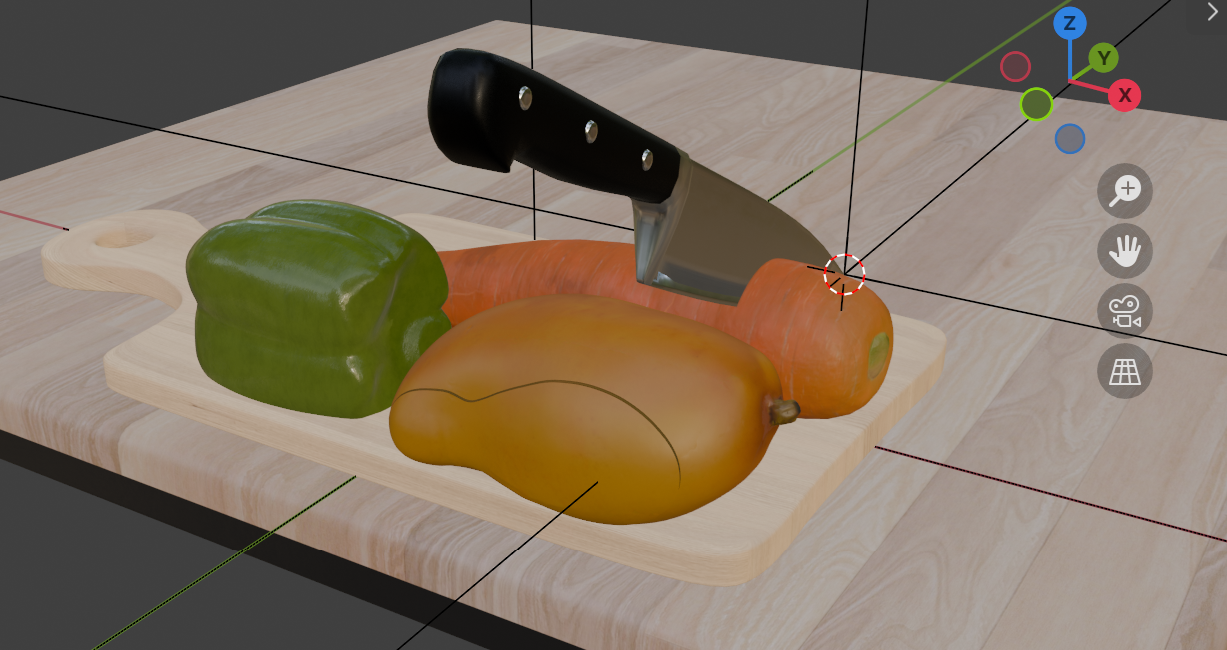

Lastly, we used a VR headset that aligns the virtual world frame with the physical setup, so that the knife and ingredient the user sees in the headset coincide with their real-world positions, enhancing the haptic experience.

System Analysis and Control

Our haptic cutting system simulates the experience of slicing various fruits and vegetables using a motorized blade controlled by a torque-feedback actuator. At the core of this system is a physically-inspired model that divides the cutting experience into three sequential haptic zones. The first is Free Space, where the blade rotates without resistance before it makes contact with the object. This is followed by the Spring Zone, which represents the initial resistance from the fruit’s surface—akin to piercing the skin. This zone is modeled using a linear spring and damping, simulating the elastic and viscous qualities of the material. Finally, the system transitions to the Rupture Zone, where the blade breaks through the surface and enters the interior of the object. Depending on the simulated material, this zone may involve constant resistance, a sudden drop in torque, or a complete release.

Each simulated fruit or vegetable is characterized by a unique set of physical parameters. These include the spring stiffness K (N·m/rad), which determines how firm the material feels; the damping coefficient B (N·m·s/rad), which adds viscosity to the motion; and the Spring Zone Radius, which defines the angular distance through which the spring force is applied before rupture. These parameters drive a real-time torque profile that is applied to the blade motor, providing the user with dynamic, material-specific haptic feedback during the cutting process.

For the mango, a soft fruit, the haptic goal is to replicate the sensation of cutting through a smooth but fibrous interior. Its model includes a moderately long spring zone followed by a sustained, constant torque that mimics the resistance felt as a knife slides through the fruit’s dense flesh. In contrast, the carrot presents a very different tactile experience. With a high spring stiffness and a short spring zone, the carrot simulates a crisp, firm surface that yields quickly. After the initial resistance is overcome, the torque drops off, mimicking the internal transition to a less dense core. The bell pepper, which is hollow and springy, is modeled with a long, soft spring zone and low stiffness. Once the blade pushes through the outer skin, torque quickly drops to zero, creating the feeling of entering an empty, compliant cavity. This sudden release is essential for conveying the pepper’s distinctive structure.

These behaviors are reflected clearly in the torque vs. blade rotation plot. The x-axis represents blade rotation in degrees, scaled to match the physical resolution of our encoder system, while the y-axis shows applied torque. For the mango, the plot shows a smooth increase in torque followed by a flat plateau, indicating persistent cutting resistance. The carrot’s plot features a steep torque rise and a shorter spring zone, followed by a quick leveling-off at lower torque. The pepper’s curve rises gently to a peak and then drops sharply, indicating its elastic skin and hollow core. All profiles are clipped at 90° of blade rotation, consistent with the physical constraints of the system, ensuring torque is zeroed beyond the realistic cutting range.

Torque vs. blade rotation angle plot.
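The three-zone model described above can be sketched in a few lines. This is an illustration only: the parameter values, the contact angle, and the names `Material`, `MATERIALS`, and `resistive_torque` are hypothetical, chosen to reproduce the qualitative shapes described in the text rather than the team's tuned values. Applying damping only in the Spring Zone and clamping the torque at zero are also assumptions.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Material:
    stiffness: float       # K, N*m/rad
    damping: float         # B, N*m*s/rad
    spring_zone: float     # angular width of the Spring Zone, rad
    rupture_torque: float  # torque held after rupture, N*m (0 = full release)


# Hypothetical values, not the parameters used on the real device.
MATERIALS = {
    "mango": Material(1.0, 0.02, math.radians(20), 0.30),   # plateau after rupture
    "carrot": Material(4.0, 0.02, math.radians(8), 0.15),   # stiff, then lower torque
    "pepper": Material(0.5, 0.01, math.radians(30), 0.0),   # soft, then hollow
}

CONTACT_ANGLE = math.radians(30)  # hypothetical angle where the blade meets the surface
MAX_ANGLE = math.radians(90)      # torque is zeroed beyond 90 degrees, per the text


def resistive_torque(material, theta, omega):
    """Torque opposing the cut at blade angle theta (rad) and speed omega (rad/s)."""
    if theta < CONTACT_ANGLE or theta > MAX_ANGLE:
        return 0.0  # Free Space, or outside the cutting range
    depth = theta - CONTACT_ANGLE
    if depth <= material.spring_zone:
        # Spring Zone: linear spring plus damping, never pulling the blade inward.
        return max(material.stiffness * depth + material.damping * omega, 0.0)
    # Rupture Zone: constant, reduced, or zero torque depending on the material.
    return material.rupture_torque
```

Sweeping `theta` from 0° to 90° at a fixed `omega` for each entry in `MATERIALS` gives a ramp followed by a plateau (mango), a steep ramp followed by a lower level (carrot), and a gentle ramp followed by a drop to zero (pepper).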

This approach allows a single actuator system to convey richly varied cutting sensations by tuning just a few physical parameters. The result is an intuitive, material-specific haptic experience that enables users to distinguish between different fruits and vegetables by feel alone—a key capability for immersive training, virtual cooking interfaces, or human-robot collaboration in food preparation.

Three push buttons are attached to pins 4, 5, and 8, one per ingredient mode. In the main loop, the active mode substitutes its own set of mechanical parameters before the torque model is called. No reboot is required: parameters take effect immediately, so the next twist of the handle feels like a different material.

Buttons for ingredient mode selection.

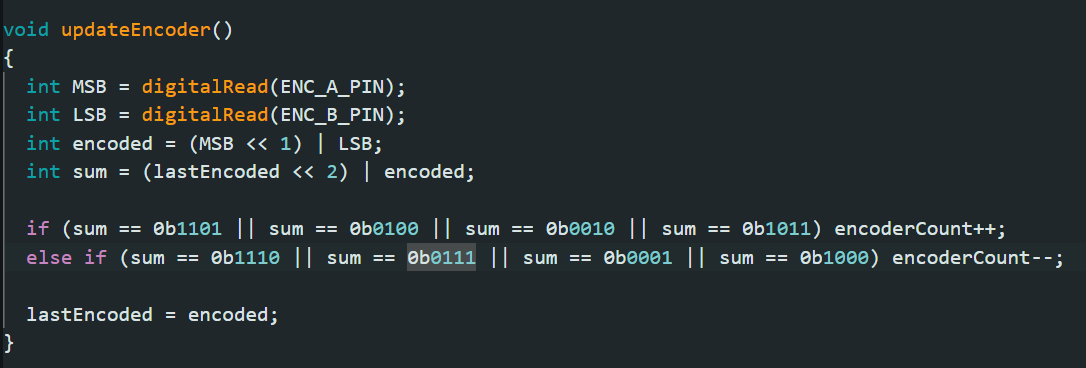
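The mode switching can be pictured as a small lookup performed at the top of each loop iteration. The sketch below is illustrative: the text gives the pin numbers but not which pin selects which ingredient, so the mapping in `BUTTON_MODES` is hypothetical, as is the choice to keep the previous mode when no button is pressed.

```python
# Hypothetical pin-to-ingredient assignment; only the pin numbers come from the text.
BUTTON_MODES = {4: "mango", 5: "carrot", 8: "pepper"}


def select_mode(current_mode, pressed_pins):
    """Return the mode of the lowest-numbered pressed button, else keep the current mode."""
    for pin in sorted(BUTTON_MODES):
        if pin in pressed_pins:
            return BUTTON_MODES[pin]
    return current_mode
```

Calling `mode = select_mode(mode, pressed)` before the torque computation means a button press changes the rendered material on the very next loop iteration.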

The encoder is wired to pins D2 and D3, each configured as INPUT_PULLUP and attached to the same ISR updateEncoder(), which fires on every level change. Inside the ISR, the current A/B levels are packed into a two-bit value encoded, merged with the previous state lastEncoded to form a four-bit Gray-code sequence sum; if sum matches one of the four forward patterns (1101 / 0100 / 0010 / 1011) it increments encoderCount, and if it matches one of the four reverse patterns (1110 / 0111 / 0001 / 1000) it decrements the counter, then stores lastEncoded = encoded for next time. In the main loop, interrupts are briefly disabled to copy encoderCount atomically; that count is multiplied by the constant RAD_PER_PULSE (radians per pulse) to obtain the current angle theta = -(count × RAD_PER_PULSE), and differenced over the elapsed microseconds to yield angular velocity omega. Thus every encoder edge is converted—without loss—into real-time angle and speed values for the torque-generation model.

Encoder input processing.
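The decoding logic described above can be mirrored in a short, hardware-free sketch. The forward and reverse patterns are taken from the text. The assumptions are that channel A is the high bit of the two-bit value, that the initial state is 00, and that `RAD_PER_PULSE` has the placeholder value shown (the real constant depends on the encoder resolution and transmission ratio). The class and function names are illustrative.

```python
import math

# Four-bit transition patterns (previous state << 2 | current state), from the text.
FORWARD = {0b1101, 0b0100, 0b0010, 0b1011}
REVERSE = {0b1110, 0b0111, 0b0001, 0b1000}

# Placeholder value: assumes 2000 counts per revolution with no gearing.
RAD_PER_PULSE = 2 * math.pi / 2000


class QuadratureDecoder:
    def __init__(self):
        self.last_encoded = 0  # assumed starting state A=0, B=0
        self.count = 0

    def update(self, a, b):
        """Process one edge; a and b are the current channel levels (0 or 1)."""
        encoded = (a << 1) | b                        # assumption: A is the high bit
        pattern = (self.last_encoded << 2) | encoded
        if pattern in FORWARD:
            self.count += 1
        elif pattern in REVERSE:
            self.count -= 1
        self.last_encoded = encoded                   # remember state for the next edge

    def angle(self):
        """Current angle in radians, with the sign flip used in the text."""
        return -(self.count * RAD_PER_PULSE)


def angular_velocity(theta, prev_theta, elapsed_us):
    """Finite-difference speed in rad/s from two angles and elapsed microseconds."""
    return (theta - prev_theta) / (elapsed_us * 1e-6)
```

Feeding the decoder the sequence (1,0), (1,1), (0,1), (0,0) walks through the four forward patterns and raises the count by four. A repeated state matches neither set and leaves the count unchanged.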

Results

Demo at the Open House.

During the open house demonstration, users were invited to interact with our haptic cutting system, which simulated the tactile experience of slicing three different ingredients: mango, carrot, and green pepper. Each user wore a VR headset to align the virtual environment with the physical setup, held the cutting handle with their right hand, and grasped a balloon embedded with particle jamming material in the other hand.

The demonstration was conducted under supervision to ensure safe and consistent usage. Most users reported a clear distinction between the different ingredient types and were able to perceive variations in cutting resistance and balloon stiffness. Notably, several users expressed strong interest in the particle jamming component, commenting on the realistic changes in compliance when interacting with balloons representing different vegetables. Overall, the system received highly positive feedback regarding both its realism and engagement.

3D Blender model.

Our final system setup.

Future Work

Considering the feedback from our users during the open house and combining our design ideas, we hope to focus on four points in the future:

1. Improve the existing cutting experience for the three ingredients, for example by adding a feedback force when the knife reaches the bottom of the green pepper.

2. Add more ingredients with complex feedback, such as meat.

3. Add a new degree of freedom (forward and backward) to the handle, so that users can experience a more complete cutting motion.

4. Increase torque output to better simulate real cutting forces, and experiment with balloon sizes to enhance realism in hand-held object representation.

After completing these changes, we plan to conduct more user tests to evaluate how faithfully the device simulates real-life cutting. In addition, we encourage future teams to investigate transmission mechanisms better suited than the existing fishing line, which could greatly improve the stability of the system.

Acknowledgments

We want to thank the ME 327 teaching team for all their assistance with our design and for providing equipment.

Files


Code, CAD, Blender files: https://github.com/ttyaaron/ME327_Haptic_Cutting

Major components and costs:

References

[1] M. Mahvash and V. Hayward, “Haptic rendering of cutting: A fracture mechanics approach,” The International Journal of Robotics Research, vol. 28, no. 3, pp. 355–375, 2009.

[2] N. Nakazato and T. Kano, “Proposal for a virtual reality system to practice knife handling in cooking,” in 2024 IEEE 13th Global Conference on Consumer Electronics (GCCE), IEEE, 2024.

[3] E. Gundogdu, X. Xu, D. Pathak, and M. Hebert, “Leveraging multimodal haptic sensory data for robust cutting,” in Proceedings of Robotics: Science and Systems (RSS), RSS Foundation, 2021.

Appendix: Project Checkpoints

Checkpoint 1

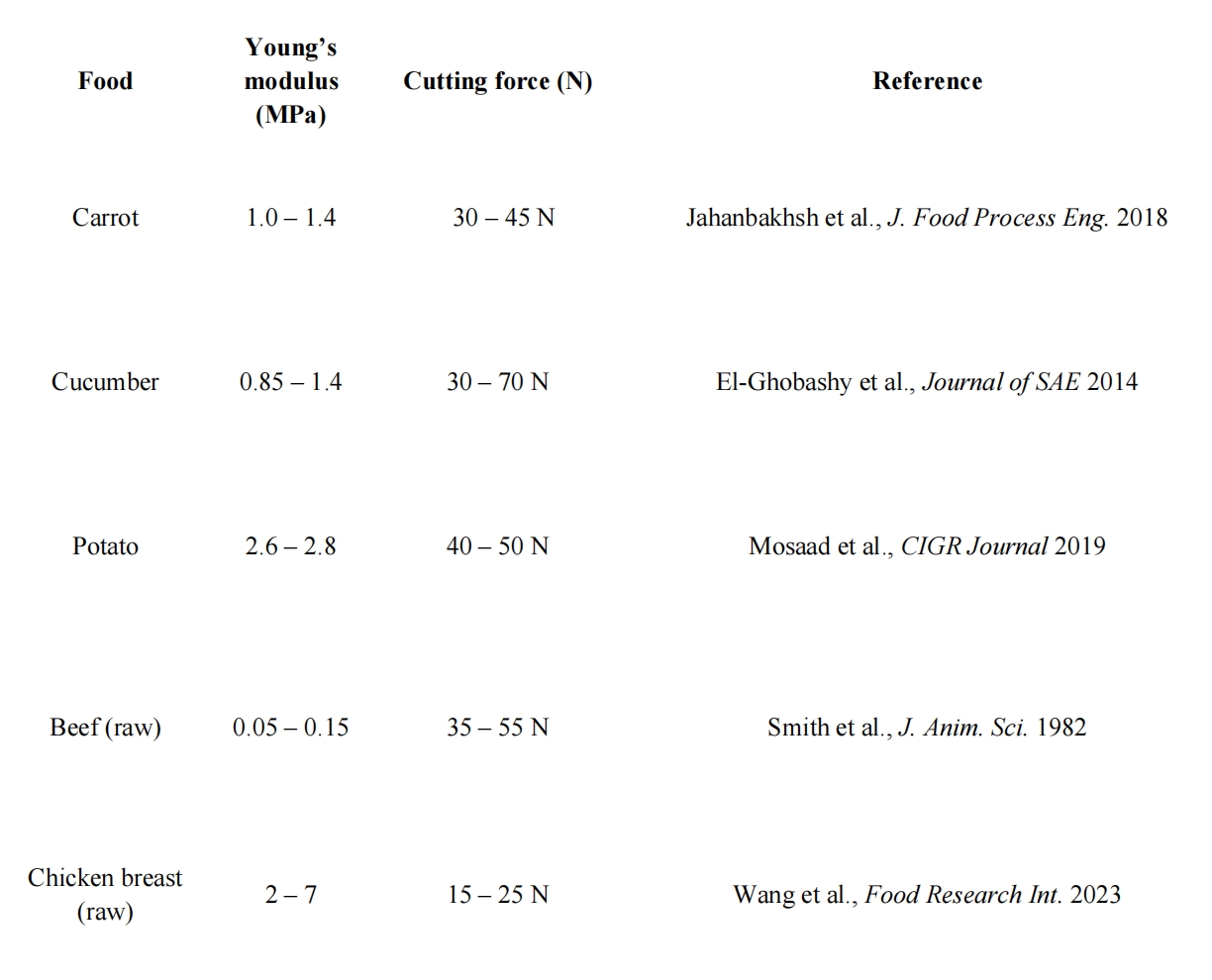

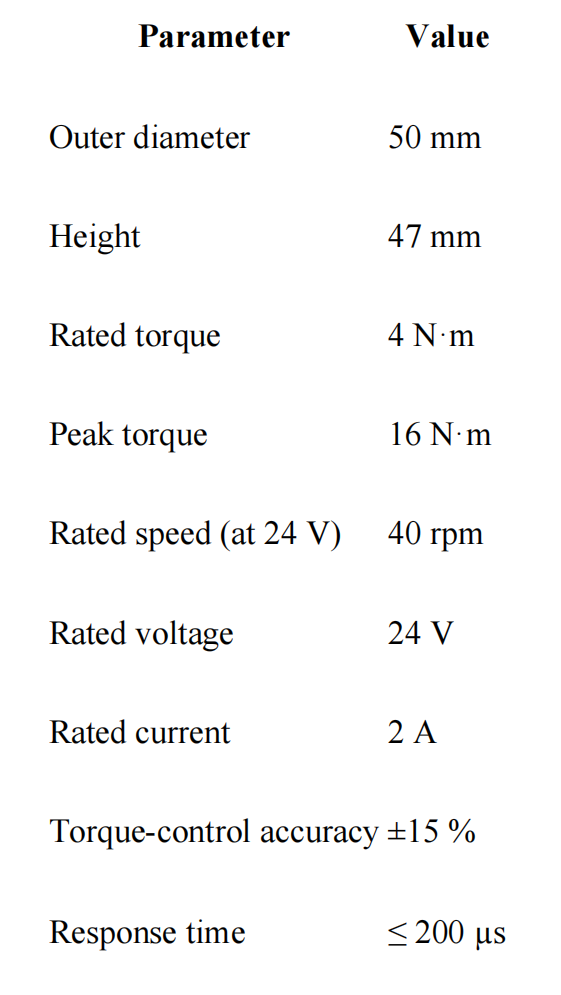

1. Material modeling: Obtain the Young’s modulus and cutting-force data for five ingredients (carrot, cucumber, potato, raw beef, raw chicken breast). The data are given in the table.

2. Control parameters: Our basic idea is to use PD control to give feedback on the handle. The following are the control parameters corresponding to various materials.

3. Actuator and sensor systems: We will use a 24V DC motor to provide torque to simulate the tactile feeling of a kitchen knife cutting food. Motor data:

We will implement the control system on the Hapkit board, using the position and velocity sensing provided by the motor.

4. CAD Design of the knife:

We are adjusting the design to make fabrication easier and the structure stronger, and we plan to 3D-print the parts over the weekend.

Checkpoint 2

1. 3D printed Demo

2. Haptic Rendering - Holding Effect

This experiment utilizes particle jamming to replicate the tactile experience of holding various vegetables. The setup involves a syringe with a 0-60 ml capacity for controlling particle jamming, paired with balloons filled with two types of particles: sand and quinoa. By adjusting the amount of air and the amount of particles in the balloons, we can simulate the feel of different vegetables such as tomatoes, onions, avocados, and potatoes. The goal is to investigate how particle jamming can mimic the softness, texture, and stiffness of these vegetables, providing insights into how materials can be manipulated for haptic feedback.

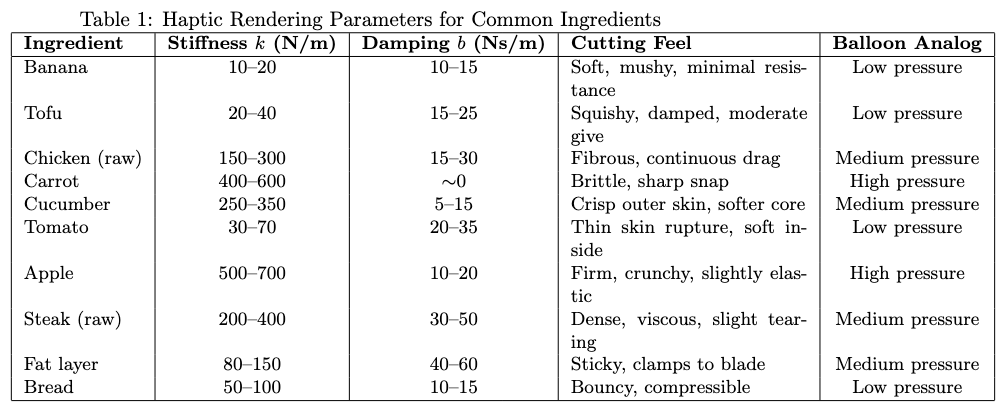

3. Knife Rendering Parameters

This table describes the stiffness and damping parameters we can use to render the cutting of different food items. These parameters were inspired by the prior work listed in our literature review. Higher stiffness values are associated with stiffer and crunchy food items. Lower stiffness but greater damping is associated with softer and fatty food items. This table also outlines the pressure of the balloon analog for the user to feel with their second hand. Higher pressure is associated with harder food items while lower pressure is for softer food items.

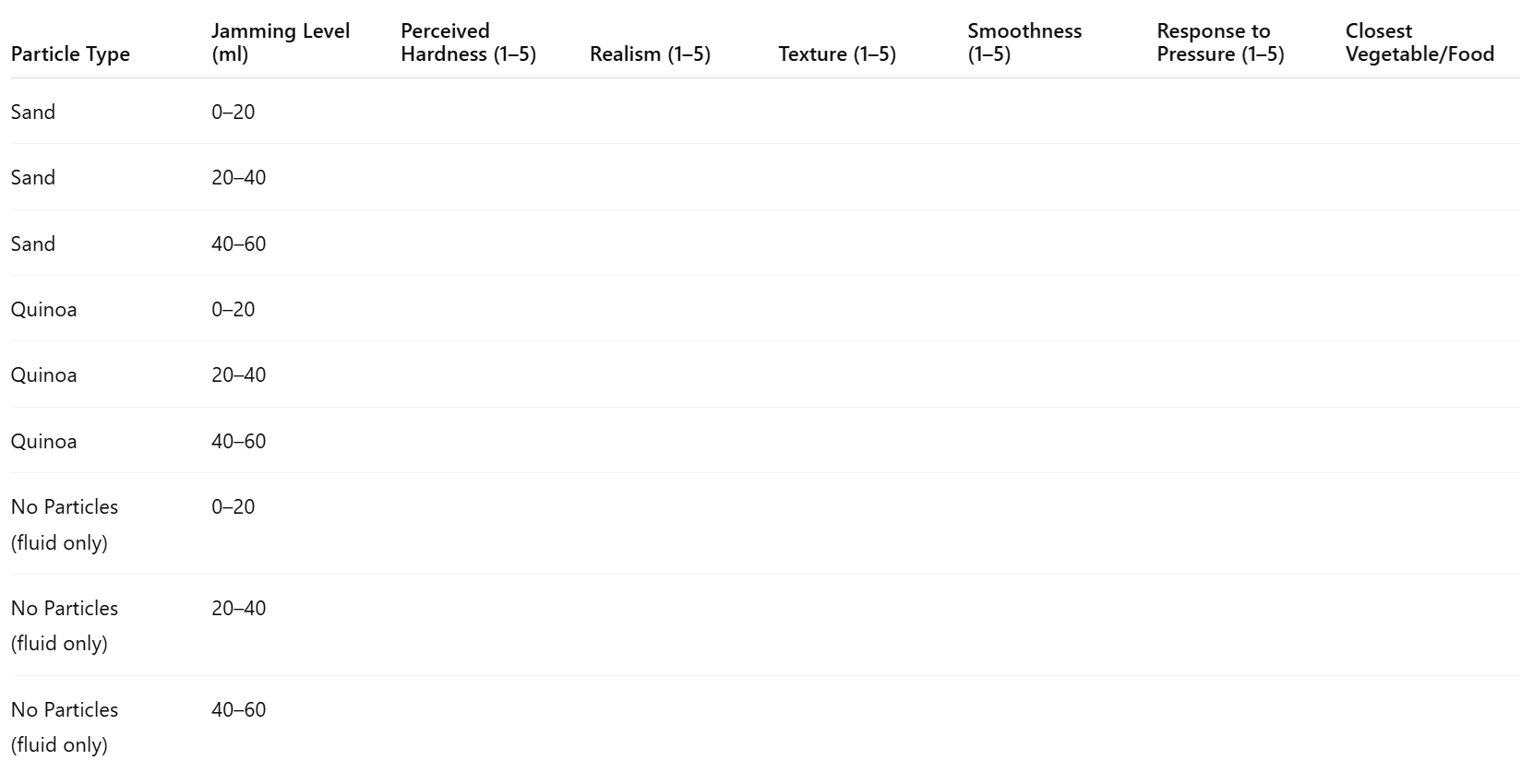

4. User Survey Table: Mapping Material Stiffness (Jamming) to Perceived Effect

Assign the closest vegetable/food option from: Tomato, Potato, Avocado, Meat, Onion, Fatty Tissue

5. Code and Algorithm

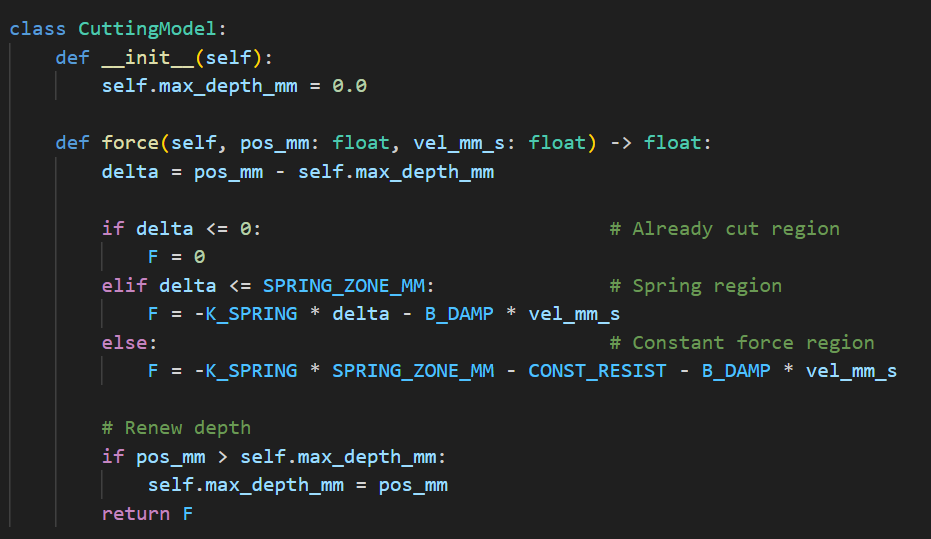

This code currently calculates the force feedback at the handle. It distinguishes three cases: the blade is within the current maximum cutting position; the blade has passed the maximum cutting position but is still in the elastic region; and the blade has passed the end of the elastic region. In these three cases the handle renders, respectively, 0 N, a spring-like force, and a fixed force. The latter two cases also include damping.